How to Choose the Right AI Visibility Tool: A Strategic Framework for Enterprise Teams

Updated on Feb 27, 2026

Introduction: The Measurement Gap in AI-First Search

You're solving a visibility crisis that most enterprises haven't fully acknowledged yet. Traditional SEO dashboards show stable keyword rankings while your brand disappears from the conversations that actually drive decisions. When prospects ask ChatGPT, Perplexity, or Gemini about solutions in your category, you have no systematic way to know what these systems say—or whether they mention you at all.

This tutorial addresses the evaluation and procurement challenge facing marketing leaders in 2026: how to select AI search visibility tracking tools that capture your brand's presence across generative engines without creating data silos or operational overhead. The framework applies whether you're managing a single B2B SaaS product or a portfolio of enterprise brands.

Who this is for: CMOs, VPs of Marketing, and SEO directors at mid-market to enterprise companies who need to operationalize AI search visibility monitoring within 90 days.

TL;DR: Decision Framework at a Glance

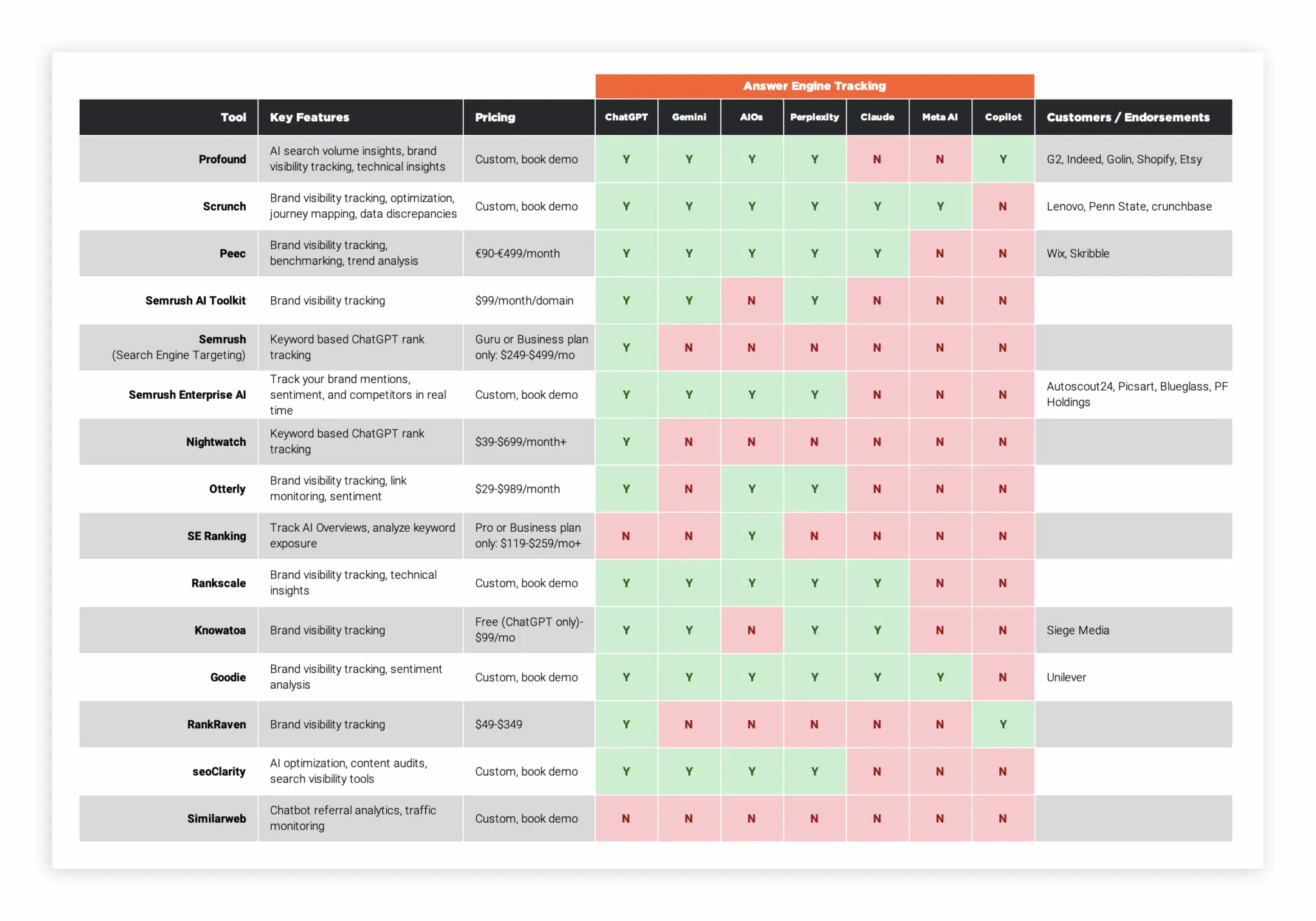

- Start with platform coverage breadth, not feature depth—ensure the tool monitors ChatGPT, Perplexity, Google AI Overviews, and Claude at minimum.

- Prioritize citation tracking over mention counting—links drive traffic; name-drops build awareness only.

- Demand query generation automation—manual prompt creation doesn't scale beyond 50 queries.

- Verify frontend monitoring capabilities—API-based testing often diverges from real user experiences significantly.

- Require actionable recommendations—monitoring without optimization guidance creates analysis paralysis.

- Validate enterprise security posture—SOC 2 Type II, SSO, and data residency options are non-negotiable for regulated industries.

Why Traditional SEO Tools Fail in AI Search Contexts

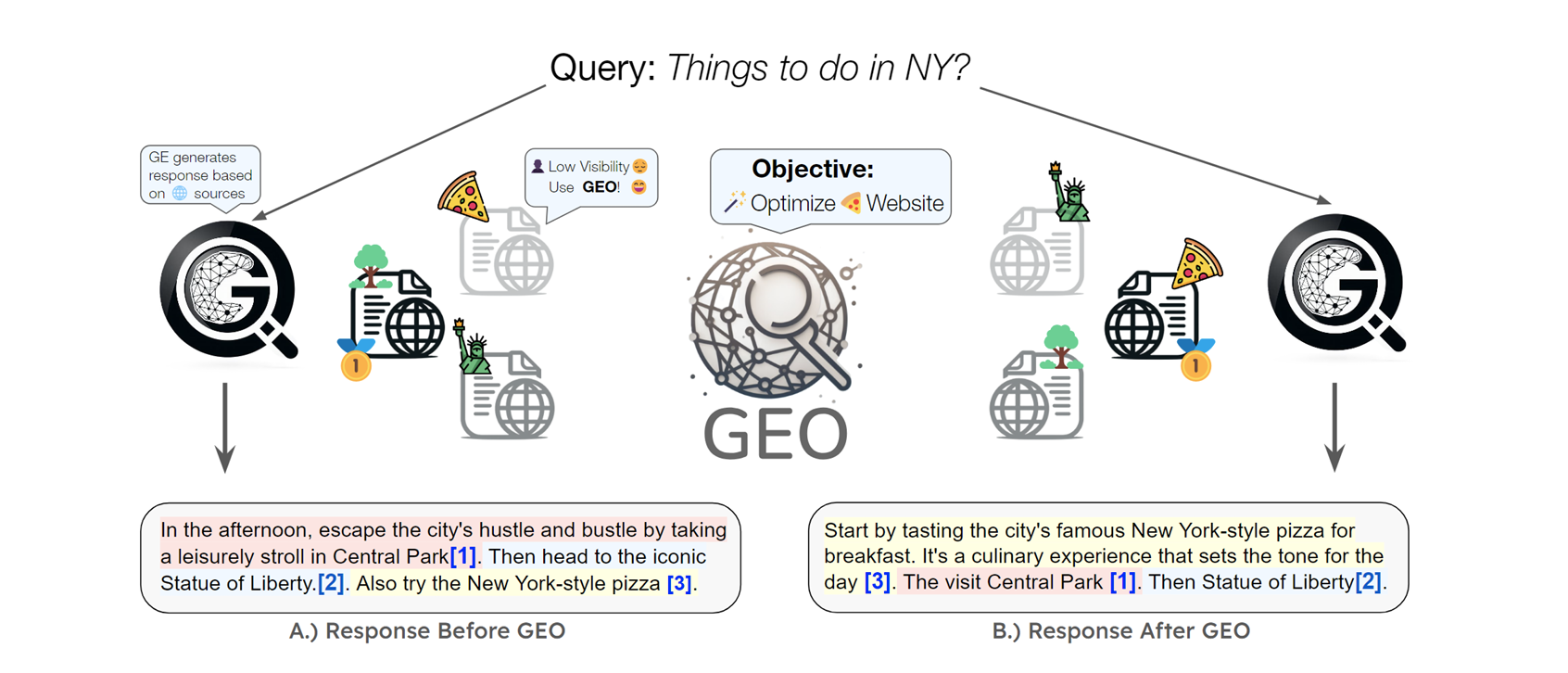

Before selecting new tooling, understand why your existing stack cannot adapt. Traditional rank trackers measure positions in linear SERPs—blue links ranked 1-10. AI search engines don't have rankings. They generate synthesized answers that blend multiple sources, cite selectively, and restructure information based on conversational context.

The technical disconnect:

- SERP trackers poll Google via API or proxy networks to capture position data. They expect consistent page structures.

- AI engines generate dynamic responses with no fixed positions to track. The same prompt produces different answers across sessions, locations, and user histories.

- Citation patterns differ fundamentally—AI systems may cite your homepage for one query and your documentation for another, with no predictable hierarchy.

This means your Ahrefs or Semrush subscription, while still valuable for traditional search, provides incomplete intelligence for AI-mediated discovery. You need AI search visibility tracking tools designed for generative response analysis, not positional ranking.

Step 1: Define Your Monitoring Scope and Objectives

What to do: Document 3-5 measurable outcomes before evaluating vendors. Vague goals like "improve AI presence" lead to tool mismatches and budget waste.

Why this matters: Different tools optimize for different use cases. Without clarity, you'll overpay for enterprise features you won't use or underinvest in capabilities critical to your workflow.

The specification framework:

- Visibility goals: "Appear in 60% of AI answers for our top 50 commercial queries" versus "Achieve top-3 recommendation status in ChatGPT for our core category"

- Protection priorities: "Reduce critical misinformation incidents in AI answers to near-zero" requires different tooling than share-of-voice tracking

- Attribution requirements: Whether you need direct pipeline connection or brand awareness measurement changes technical requirements significantly

Common mistake: Starting with vendor demos rather than internal requirements gathering. Teams that skip this step typically select tools based on interface polish rather than functional fit, leading to 6-12 month replacement cycles.

Step 2: Evaluate Platform Coverage Against Your Audience Reality

What to do: Map your ideal customer profile's AI search behavior, then verify tool coverage matches.

Why this matters: Platform usage varies dramatically by demographic and use case. B2B buyers researching enterprise software favor Perplexity for depth; consumers making quick purchases rely on Google AI Overviews. Monitoring the wrong engines wastes budget and creates false confidence.

The coverage hierarchy for 2026:

- ChatGPT: Largest global market share among chatbots; essential for B2C and mainstream B2B

- Google AI Overviews: Dominates high-intent commercial queries; correlates with 34.5% CTR drops for traditional top results

- Perplexity: 10M+ monthly active users; preferred by researchers and technical buyers

- Gemini/Copilot: Growing adoption via Android and Microsoft ecosystem integration

- Claude: Strong in developer and academic communities
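
One way to make the mapping exercise concrete is to weight each platform by how much of your audience uses it, then check what fraction a given tool actually covers. The sketch below is illustrative only: the function name and the audience shares are hypothetical placeholders, and you would substitute figures from your own customer research.

```python
def audience_coverage(audience_share, tool_platforms):
    """Fraction of audience AI-search usage that a tool's platform list covers.

    audience_share: platform name -> relative share of your audience (any scale).
    tool_platforms: set of platform names the tool monitors.
    """
    total = sum(audience_share.values())
    if total == 0:
        raise ValueError("audience_share must contain a non-zero share")
    covered = sum(
        share for platform, share in audience_share.items()
        if platform in tool_platforms
    )
    return covered / total


# Hypothetical shares for illustration; not measured data.
shares = {"ChatGPT": 50, "Google AI Overviews": 30, "Perplexity": 15, "Claude": 5}
coverage = audience_coverage(shares, {"ChatGPT", "Perplexity"})
```

A tool with a long platform list can still score poorly here if it misses the one or two engines your buyers actually use.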

Critical evaluation question: Does the tool monitor frontend interfaces (what users actually see) or rely solely on API responses? API monitoring often misses personalization effects, A/B testing variations, and real-time model updates that significantly impact visibility.

Dageno application: Dageno's monitoring infrastructure covers the full spectrum of AI search platforms including ChatGPT, Perplexity, Google AI Overviews, Gemini, Claude, Grok, and DeepSeek. Rather than limiting coverage to a single engine, the platform provides unified visibility tracking across all major generative interfaces, ensuring you don't miss audience segments based on platform preferences. This comprehensive coverage eliminates the need for multiple point solutions and the data fragmentation they create.

Step 3: Distinguish Mentions from Citations in Tracking Methodology

What to do: Verify that your selected AI search visibility tracking tools differentiate between brand name appearances and actual source citations with URLs.

Why this matters: Mentions build awareness; citations drive traffic and measurable ROI. Tools conflating these metrics obscure true performance. A brand mentioned frequently but rarely cited gains no direct traffic benefit while facing higher reputation risk if the AI mischaracterizes their offering.

The technical distinction:

- Mentions: Brand name appears in generated text without source attribution

- Citations: The response attributes content to your brand through a clickable link to your domain or an explicit source reference

Evaluation test: Ask vendors to show you a sample report distinguishing these metrics. If they cannot provide clear separation—or if their "citation" tracking merely counts domain appearances without link verification—continue your search.
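
The mention/citation distinction can be expressed as a small classification rule, which is useful as a reference when reviewing a vendor's sample report. This is a minimal sketch, assuming each captured answer comes with its text and a list of cited URLs; the function name and the three labels are illustrative, not any vendor's actual method.

```python
import re
from urllib.parse import urlparse


def classify_response(answer_text, cited_urls, brand, domain):
    """Label one AI answer as 'citation', 'mention', or 'absent' for a brand."""
    domain = domain.lower()
    # Citation: at least one cited URL points at the brand's domain or a subdomain.
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return "citation"
    # Mention: the brand name appears as a whole word, but nothing links to the domain.
    if re.search(r"\b" + re.escape(brand) + r"\b", answer_text, re.IGNORECASE):
        return "mention"
    return "absent"
```

Note that the check verifies the link's host rather than searching for the domain string anywhere in the answer, which is the "link verification" the evaluation test asks vendors to demonstrate.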

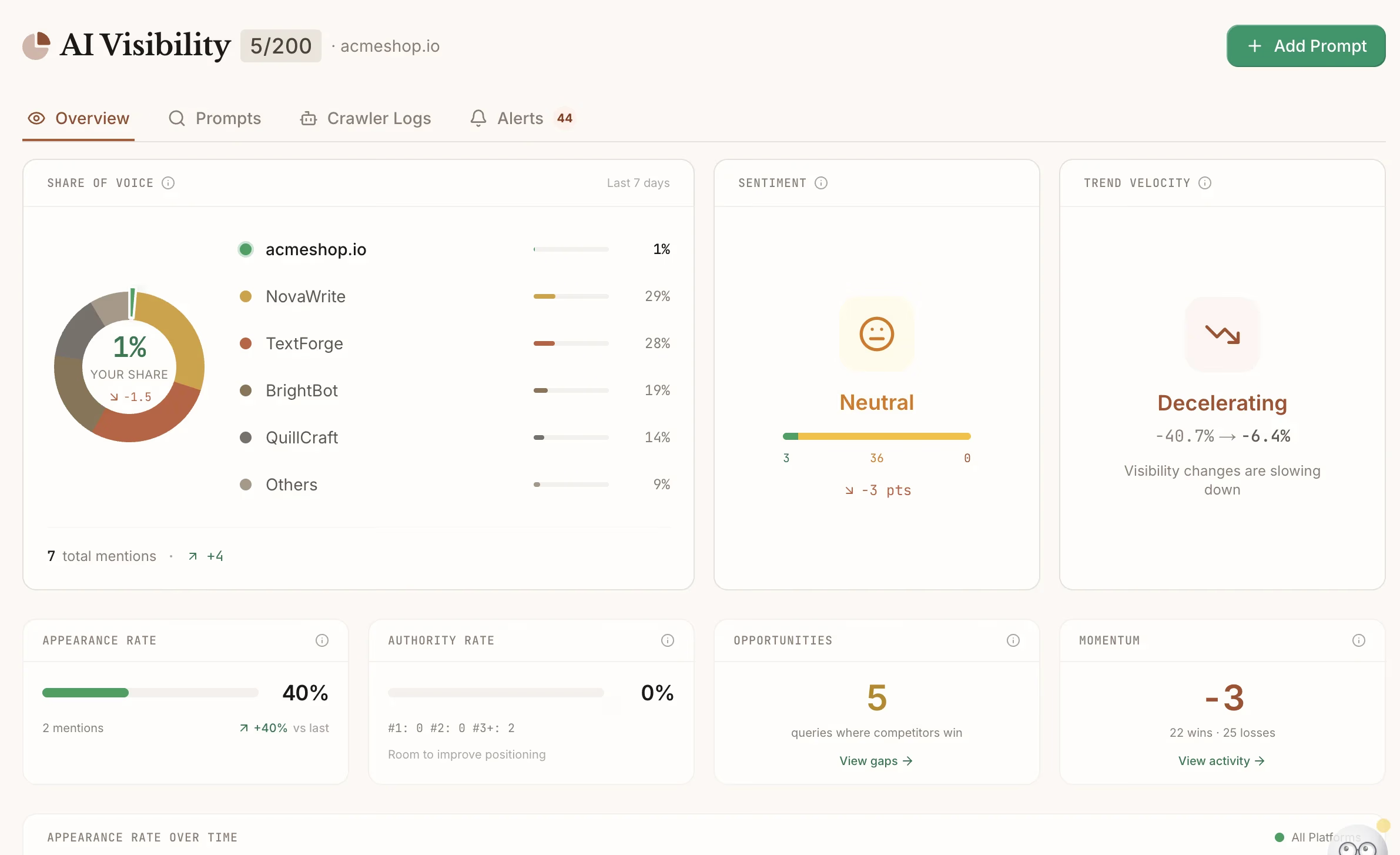

Dageno differentiation: Dageno's Answer Engine Insights module tracks both mention frequency and citation quality across all monitored platforms, providing the granular attribution data necessary for ROI calculation. The system distinguishes between passive references and active recommendations, enabling you to prioritize optimization efforts based on business impact rather than vanity metrics. This includes sentiment analysis to identify when AI systems characterize your brand positively versus negatively, and competitive benchmarking to show your share of voice relative to industry peers.

Step 4: Assess Query Generation and Prompt Intelligence Capabilities

What to do: Evaluate whether the tool automates prompt discovery or requires manual input for every query you want to track.

Why this matters: Manual prompt creation creates an operational bottleneck. Enterprise brands need to monitor hundreds or thousands of query variations. Tools without automated query generation force you to hire dedicated staff for prompt management—costs that often exceed the platform subscription.

The capability spectrum:

- Basic: Manual prompt entry only; suitable for <50 queries

- Intermediate: Keyword-to-prompt transformation (converts your SEO keywords into conversational queries)

- Advanced: AI-powered prompt discovery based on your content, competitor analysis, and trending topics in your category

Evaluation criteria: Ask vendors to demonstrate how their system would generate prompts for your specific industry. Generic examples indicate limited practical utility. The best tools ingest your existing content and automatically identify gaps between what you cover and what AI engines are being asked about.

Common mistake: Underestimating prompt volume needs. Teams typically require 3-5x more prompts than initially estimated to capture the full query landscape, including variations in phrasing, intent, and funnel stage.
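
To see why prompt counts grow so quickly, consider the intermediate tier, keyword-to-prompt transformation. The sketch below expands a short keyword list across intents and audiences using fixed templates. The templates, keywords, and audience labels are all hypothetical, and real tools likely use more sophisticated generation.

```python
from itertools import product

# Hypothetical templates, one per intent; real phrasing would come from user research.
TEMPLATES = {
    "informational": "How do {kw} work?",
    "commercial": "What are the best {kw} for {audience}?",
    "comparison": "How do the leading {kw} compare for {audience}?",
}


def expand_keywords(keywords, audiences):
    """Turn SEO keywords into conversational prompts, skipping duplicates."""
    seen = set()
    prompts = []
    for kw, audience in product(keywords, audiences):
        for intent, template in TEMPLATES.items():
            text = template.format(kw=kw, audience=audience)
            if text not in seen:  # the informational template ignores audience
                seen.add(text)
                prompts.append({"intent": intent, "prompt": text})
    return prompts


prompts = expand_keywords(
    ["AI visibility tools", "rank trackers"],
    ["enterprise marketing teams", "B2B SaaS startups"],
)
```

Two keywords and two audiences already yield ten distinct prompts with only three templates, before any phrasing variations are added.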

Step 5: Validate Data Collection Methodology and Accuracy

What to do: Investigate how the tool collects and processes AI responses. Transparency here indicates technical maturity.

Why this matters: AI responses vary significantly based on timing, user context, and model updates. Tools using insufficient sampling provide unreliable snapshots rather than trendable data. You need confidence that visibility changes reflect actual performance shifts, not measurement noise.

The methodology checklist:

- Sampling frequency: Daily minimum; hourly for active campaigns

- Repetition strategy: Multiple runs per prompt to account for response variance

- Geographic distribution: IP-based localization if you operate in multiple markets

- Citation capture: Ability to track which specific URLs AI systems reference

- Historical baselines: Data retention for trend analysis (minimum 12 months)
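
The repetition strategy matters because a single run per prompt gives a yes/no answer, not a rate. As a rough illustration, the sketch below estimates an inclusion rate from repeated runs and attaches a Wilson score interval (a standard interval for proportions, chosen here as an assumption; vendors may use other methods). The function name is hypothetical.

```python
import math


def inclusion_rate(runs, z=1.96):
    """Estimate how often a brand appears, with a Wilson score interval.

    runs: list of booleans, True when the brand appeared in that run.
    z: normal quantile; 1.96 corresponds to roughly 95% confidence.
    Returns (rate, lower_bound, upper_bound).
    """
    n = len(runs)
    if n == 0:
        raise ValueError("need at least one run")
    p = sum(runs) / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return p, max(0.0, centre - half), min(1.0, centre + half)


rate, low, high = inclusion_rate([True] * 6 + [False] * 4)
```

With ten runs the interval is wide, so a week-over-week change of a few points is indistinguishable from noise; more runs per prompt narrow the interval.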

Red flag: Vendors unwilling to explain their data collection methodology or claiming "proprietary algorithms" without technical detail. This often masks simple API polling that misses frontend variations.

Step 6: Require Actionable Recommendations Beyond Monitoring

What to do: Select tools that bridge the gap between measurement and optimization, not just dashboards showing problems.

Why this matters: Monitoring without guidance creates analysis paralysis. The best AI search visibility tracking tools identify why you're not appearing and what to fix, whether that's content gaps, technical barriers, or citation source authority issues.

The optimization hierarchy:

- Gap analysis: Identifies which queries you're missing entirely

- Content recommendations: Suggests specific topics, formats, or updates to improve inclusion

- Technical guidance: Flags schema markup, entity definition, or site architecture issues blocking AI comprehension

- Competitive intelligence: Shows which sources AI prefers for your target queries and why

Dageno integration: Dageno's content optimization module extends beyond tracking to provide specific, implementable recommendations. The system analyzes your existing content library against AI citation patterns, identifying which pages need expansion, which entities require clarification, and which topics present the highest opportunity for visibility gains. This closes the loop between monitoring and action—a critical requirement for teams without dedicated GEO specialists. The platform also includes topic discovery tools that identify emerging queries in your category before competitors capture them.

Step 7: Evaluate Enterprise Security and Compliance Posture

What to do: Verify security certifications and data governance capabilities match your organizational requirements.

Why this matters: AI visibility tools process your brand data, competitive intelligence, and potentially customer information. In regulated industries (finance, healthcare, legal), inadequate security posture creates compliance risk that outweighs any visibility benefits.

The non-negotiable checklist:

- SOC 2 Type II certification: Minimum standard for enterprise data handling

- SSO/SAML support: Essential for organizations with centralized identity management

- Role-based access controls (RBAC): Prevents unauthorized data exposure across teams

- Audit logging: Required for compliance documentation and security incident response

- Data residency options: Critical for organizations with geographic compliance requirements (GDPR, etc.)
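
If you are comparing several vendors, the checklist above can be kept as a simple set and diffed against each vendor's stated capabilities. This is a minimal sketch; the identifiers are hypothetical labels for the five items, and the vendor data would come from their security documentation rather than from code.

```python
# Hypothetical identifiers for the five checklist items above.
REQUIRED_CONTROLS = {
    "soc2_type2",
    "sso_saml",
    "rbac",
    "audit_logging",
    "data_residency",
}


def missing_requirements(vendor_capabilities, required=REQUIRED_CONTROLS):
    """Return required controls the vendor does not claim, sorted for stable output."""
    return sorted(set(required) - set(vendor_capabilities))


gaps = missing_requirements({"soc2_type2", "sso_saml", "rbac"})
# gaps == ["audit_logging", "data_residency"]
```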

SMB vs. Enterprise consideration: Smaller teams can sometimes tolerate less rigorous security for faster deployment. However, if you anticipate scaling beyond 50 users or operating in regulated industries, start with enterprise-grade security to avoid painful platform migrations later.

Step 8: Calculate Total Cost of Ownership, Not Just Subscription Fees

What to do: Model the full cost including implementation, training, integration development, and ongoing management.

Why this matters: The AI visibility tool market shows significant pricing variation, with industry averages around $337/month but enterprise tiers reaching $500-2000/month. Hidden costs in per-platform fees, query volume limits, and API access tiers can double the apparent subscription price.

The TCO framework:

- Base subscription: Monthly or annual platform fee

- Per-platform add-ons: Some tools charge extra for each AI engine monitored

- Query volume overages: Exceeding monthly prompt limits triggers automatic upgrades

- Integration costs: API access, webhook development, BI connector configuration

- Personnel time: Training, report generation, and optimization workflow management
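
The framework above translates into straightforward arithmetic. The sketch below models annual TCO from the five components. Apart from the $337/month base fee (the industry average cited earlier), every number in the example is a made-up placeholder, and the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ToolCosts:
    base_monthly: float          # platform subscription
    per_platform_monthly: float  # add-on fee per extra AI engine
    extra_platforms: int         # number of add-on engines
    overage_monthly: float       # expected query overage charges
    integration_one_time: float  # API, webhook, and BI connector setup
    staff_hours_monthly: float   # training, reporting, workflow management
    staff_hourly_rate: float


def annual_tco(c: ToolCosts) -> float:
    """First-year total cost: twelve months of recurring costs plus one-time setup."""
    recurring = (
        c.base_monthly
        + c.per_platform_monthly * c.extra_platforms
        + c.overage_monthly
        + c.staff_hours_monthly * c.staff_hourly_rate
    )
    return recurring * 12 + c.integration_one_time


# Placeholder inputs for illustration only.
example = ToolCosts(337, 50, 3, 40, 3000, 10, 60)
total = annual_tco(example)          # 16524
subscription_only = 337 * 12         # 4044
```

In this hypothetical case the first-year total is roughly four times the subscription alone, with personnel time as the largest component.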

Pricing tier alignment:

- Startups/Solo (≤$50/month): Basic monitoring for 1-2 brands, limited query volumes

- Mid-market ($79-149/month): Multi-engine coverage, automated recommendations, team collaboration features

- Enterprise ($295-499+/month): Unlimited queries, API access, advanced security, custom integrations

Dageno value proposition: Dageno's enterprise solution consolidates multiple capabilities—monitoring across 8+ AI platforms, content optimization, competitive intelligence, and traditional SEO tracking—into a single platform. This integration reduces the tool sprawl that drives up TCO through integration maintenance, data reconciliation, and training overhead. For organizations currently managing separate contracts for rank tracking, brand monitoring, and AI visibility, consolidation typically reduces total tooling costs by 30-40% while improving data coherence.

Step 9: Pilot Before Committing to Annual Contracts

What to do: Run parallel trials of 2-3 tools with identical query sets to compare data accuracy and usability.

Why this matters: Vendor demos showcase idealized scenarios. Real-world testing reveals data quality variations, UI friction, and workflow integration challenges that determine long-term adoption.

The 30-day pilot protocol:

- Define test queries: 50-100 prompts representing your core use cases (branded, commercial, informational)

- Establish baseline: Document current visibility across all test prompts before tool implementation

- Run parallel tracking: Test identical queries across 2-3 platforms simultaneously

- Evaluate consistency: Compare results for the same prompts across tools—significant divergence indicates methodology issues

- Test actionability: Attempt to implement one recommendation from each tool and measure implementation time

Success metrics: Data accuracy (correlation with manual checks), time-to-insight (how quickly you can generate actionable reports), and optimization velocity (speed of implementing recommendations).
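
The data accuracy metric can be approximated during a pilot by spot-checking a sample of prompts by hand and measuring how often each tool agrees. The sketch below uses simple percentage agreement, which is an assumption on our part; the function name and the sample data are hypothetical.

```python
def agreement_rate(tool_results, manual_checks):
    """Share of prompts where a tool's result matches a manual check.

    Both arguments map prompt text -> bool (True when the brand appeared).
    Only prompts present in both mappings are compared.
    """
    shared = tool_results.keys() & manual_checks.keys()
    if not shared:
        raise ValueError("no prompts in common")
    matches = sum(tool_results[p] == manual_checks[p] for p in shared)
    return matches / len(shared)


# Hypothetical pilot sample.
manual = {"q1": True, "q2": False, "q3": True, "q4": True}
tool_a = {"q1": True, "q2": False, "q3": False, "q4": True}
score = agreement_rate(tool_a, manual)  # 0.75
```

Because AI answers vary between runs, run the manual checks close in time to the tool's collection window, and treat small differences between tools as inconclusive.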

Step 10: Integrate with Existing Workflows and Attribution Models

What to do: Connect AI visibility data to your existing marketing analytics stack for unified reporting.

Why this matters: AI search visibility doesn't exist in isolation. You need to correlate visibility changes with traffic, lead quality, and revenue to demonstrate ROI and justify continued investment.

Integration requirements:

- API access: For custom data pulls into your data warehouse

- BI connectors: Native integrations with Looker, Tableau, or similar platforms

- CRM connectivity: Attribution from AI visibility to pipeline and closed-won deals

- Export flexibility: CSV, JSON, or Parquet formats for custom analysis

Attribution modeling note: Traditional last-click attribution fails for AI search because users may see your brand in a ChatGPT response, then search Google for your brand name to visit your site. You need multi-touch models that credit AI visibility for assisted conversions.
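
To illustrate the difference, the sketch below compares last-click attribution with linear multi-touch attribution, one of the simplest multi-touch models. The touchpoint labels and revenue figures are hypothetical, and capturing an "AI answer" touchpoint in practice depends on whatever signal your stack can record.

```python
from collections import defaultdict


def last_click_attribution(paths):
    """Credit all revenue to the final touchpoint in each conversion path."""
    credit = defaultdict(float)
    for touchpoints, revenue in paths:
        if touchpoints:
            credit[touchpoints[-1]] += revenue
    return dict(credit)


def linear_attribution(paths):
    """Split each conversion's revenue equally across all its touchpoints."""
    credit = defaultdict(float)
    for touchpoints, revenue in paths:
        if not touchpoints:
            continue
        share = revenue / len(touchpoints)
        for touchpoint in touchpoints:
            credit[touchpoint] += share
    return dict(credit)


# Hypothetical conversion paths: (ordered touchpoints, revenue).
paths = [
    (["ai_answer", "branded_search"], 1000.0),
    (["organic_search"], 500.0),
]
```

Under last-click, `ai_answer` receives no credit for the first path, while the linear model assigns it half. Position-based or time-decay models are alternatives if equal weighting overstates early touches.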

Dageno implementation: Dageno's unified dashboard integrates AI visibility metrics with traditional SEO rankings, allowing you to track the relationship between generative search presence and organic traffic performance. This unified view prevents the siloed analysis that leads to misattribution and budget misallocation. The platform's Botsight Analytics feature specifically tracks how AI search visibility correlates with branded search volume and direct traffic, providing the attribution clarity needed for executive reporting.

FAQ

Q: What are AI search visibility tracking tools and how do they differ from traditional SEO platforms?

AI visibility tools monitor brand mentions and citations within generative AI responses across ChatGPT, Perplexity, Gemini, and other LLMs. Unlike traditional SEO trackers that measure keyword positions in SERPs, these tools analyze conversational answers for inclusion rates, sentiment, and source attribution. They address the shift from ranked links to synthesized recommendations where visibility means being cited as an authoritative source, not occupying position one.

Q: How do I know if my business needs a dedicated AI visibility platform versus using my existing SEO tool's AI features?

Evaluate your current tool's AI capabilities against the criteria in this guide. If your SEO platform only tracks Google AI Overviews without covering ChatGPT, Perplexity, or Claude, or if it provides mention counts without citation tracking, you have coverage gaps that dedicated AI search visibility tracking tools can fill. The decision hinges on whether AI-mediated discovery represents a significant traffic or reputation risk for your business.

Q: What technical requirements should I verify before purchasing an AI visibility tool?

Confirm the tool monitors frontend user interfaces rather than relying solely on API responses, as these often diverge significantly. Verify query generation automation capabilities, citation quality tracking (not just mention counting), and data export options for integration with your existing analytics stack. For enterprise deployments, require SOC 2 Type II certification and SSO support.

Q: How frequently should AI search visibility tracking tools update data to be useful?

Daily updates are the sensible default for trend detection. Weekly updates can suffice for stable, established brands in slow-moving industries, while hourly or real-time updates become necessary during product launches, PR crises, or active optimization campaigns when you need immediate feedback on changes. Verify whether your selected tool offers on-demand refreshes or only scheduled updates.

Q: Can AI visibility tools improve my rankings, or do they only monitor them?

Most tools focus on monitoring and analysis, but advanced platforms provide specific optimization recommendations. These range from content gap analysis (identifying topics AI engines associate with your category that you haven't covered) to technical guidance (schema markup improvements that increase citation likelihood). The most effective implementations combine monitoring with active content optimization workflows.

Conclusion: From Reactive Measurement to Strategic Advantage

The shift from traditional SEO to AI search visibility monitoring represents more than a tooling change—it requires rethinking how your brand creates value in AI-mediated discovery experiences. The framework outlined here moves you from reactive measurement (checking if you appear) to strategic optimization (systematically improving inclusion quality).

Why this approach works in the AI search era: As traditional search traffic declines and AI-first discovery grows from 13 million users (2023) to a projected 90 million by 2027, visibility in generative answers becomes a competitive necessity, not a nice-to-have. The tools you select today determine whether you capture this shift or cede ground to competitors who optimized earlier.

Specific next steps:

- Audit current visibility: Use HubSpot's free AI Search Grader or similar tools to establish baseline performance before investing in paid platforms

- Map your query landscape: Document the 50-100 most critical prompts your prospects ask AI systems about your category

- Pilot two tools: Run parallel trials with identical query sets to validate data accuracy and workflow fit

- Connect to outcomes: Integrate visibility data with your CRM and analytics to establish attribution models that demonstrate ROI

The brands winning in AI search aren't necessarily the largest. They're the ones who understood earliest that visibility in AI-generated answers requires intentional, systematic optimization supported by the right AI search visibility tracking tools.

About the Author

Updated by

Ye Faye

Ye Faye is an SEO and AI growth executive with extensive experience spanning leading SEO service providers and high-growth AI companies, bringing a rare blend of search intelligence and AI product expertise. As a former Marketing Operations Director, he has led cross-functional, data-driven initiatives that improve go-to-market execution, accelerate scalable growth, and elevate marketing effectiveness. He focuses on Generative Engine Optimization (GEO), helping organizations adapt their content and visibility strategies for generative search and AI-driven discovery, and strengthening authoritative presence across platforms such as ChatGPT and Perplexity.